March 6, 2026 — First episode

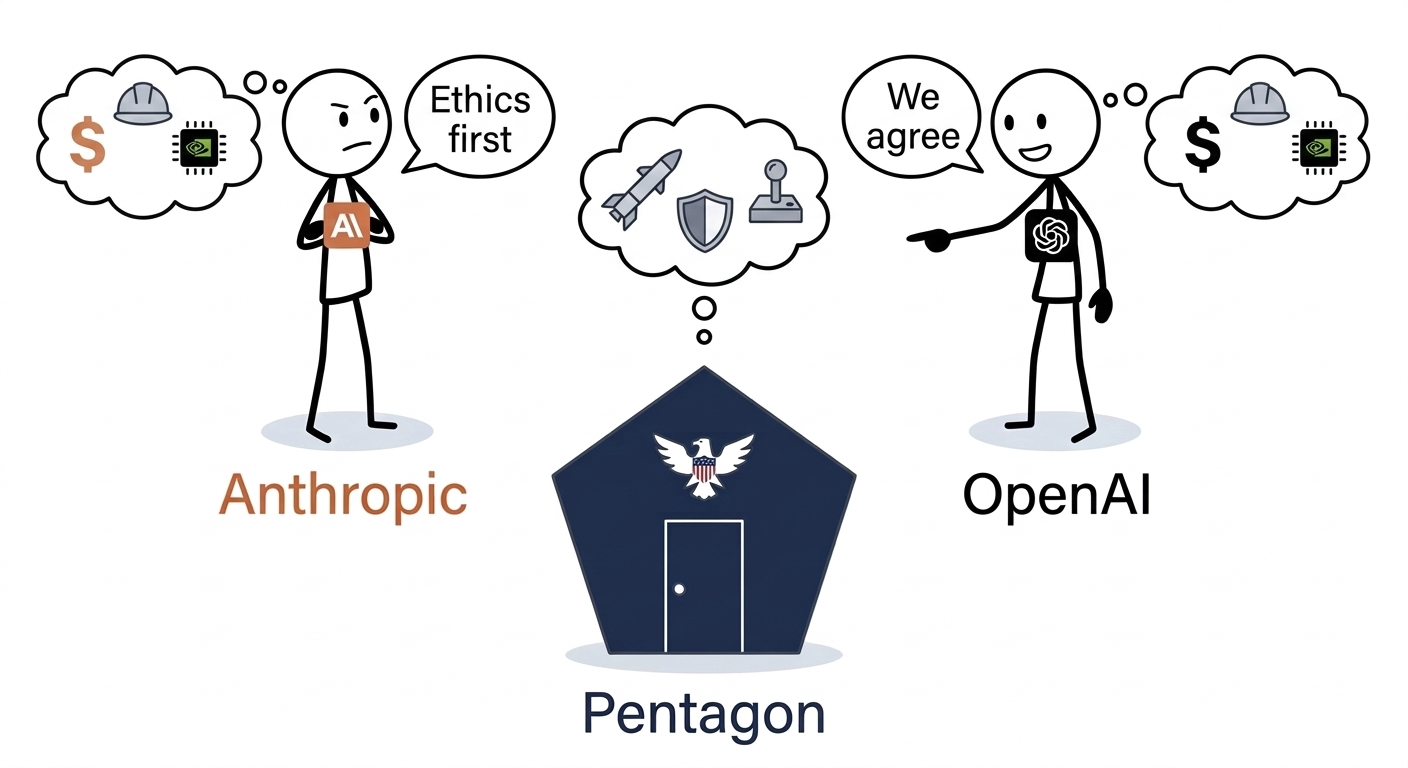

Pentagon. Anthropic said no. OpenAI said yes. It probably changes nothing

This week I followed in real time an affair that took me much longer than expected to untangle. On Twitter, Dario Amodei and Sam Altman were posting their positions live. Employees were signing open letters. Millions of users were canceling their subscriptions. And the CEOs of two of the most important AI labs in the world were calling each other liars — in public.

At first glance: an ethical conflict between two companies.

On closer inspection: something more structural, older, and more interesting.

Here’s what I make of it.

What this newsletter is trying to do

Before diving into the facts, a note on what we’re trying to accomplish here.

This is not a news summary. There are plenty of those.

The goal is to reason from first principles: start from verifiable facts, then look for second- and third-order consequences (what isn’t being said, why things are happening the way they are, what it reveals about the deeper mechanics), and draw frameworks that are useful in everyday life and business.

Because what’s at stake here goes far beyond OpenAI and Anthropic.

Act 1 — The players (2021–2024)

To understand this affair, you have to go back five years, to 2021.

Anthropic was founded in 2021 by Dario Amodei, his sister Daniela, and a dozen engineers — all former OpenAI employees. Official reason: deep disagreements about the direction their former employer was taking on AI safety.

From the start, Anthropic positioned itself differently. They published a document called “Constitutional AI” — an approach to aligning models on explicit values drawn from sources like the Universal Declaration of Human Rights. In January 2026, they published a formal “Constitution” for Claude — an ethical framework written with philosophers, establishing a clear hierarchy: safety > ethics > internal guidelines > helpfulness.

Serious stuff on paper. And rare in the industry.

OpenAI, for its part, followed the inverse trajectory. Founded in 2015 as a nonprofit, it progressively transformed into a commercial company, with a valuation that would reach $730 billion by 2026. It has a model spec too, more prescriptive and less philosophical. And recently it integrated advertising into ChatGPT’s free tier, a clear signal of its commercial direction.

In everyday use, though, reality is the opposite of what you might expect. ChatGPT is today one of the most restricted AI tools in practice: layers of filters, frequent refusals whenever a subject becomes sensitive, stacked safety metaprompts. The more you use it on complex cases, the more you hit the guardrails.

Claude Code, on the other hand, is a tool built for developers — it runs directly in the editor, with no filter overlay, no mid-session blocks. That’s precisely why it’s been massively adopted by professional developers. The most “open” AI in practice comes from Anthropic, not OpenAI. This paradox will matter later.

June 2024: Anthropic becomes the first frontier lab to deploy its models on classified US government networks — via a partnership with Palantir. No ideological resistance to working with defense. A signed contract, deployed teams.

July 2025: the contract is formalized at $200 million. The Pentagon explicitly accepts Anthropic’s Acceptable Use Policy, including its restrictions on autonomous weapons and mass surveillance. Both parties signed knowing exactly what conditions the other had set.

Act 2 — The regime change (January 2026)

The Trump administration arrived with a different vision of military AI.

January 2026: Pete Hegseth, the new Secretary of Defense, publishes an AI strategy memo for the DoD. It requires all AI contracts to include an “any lawful use” clause: the government may use the model for any purpose that is legal. No carve-outs. No negotiated exceptions.

For Anthropic, this is directly incompatible with their Acceptable Use Policy. They have an active contract that says the opposite.

Negotiations begin.

Act 3 — The week of February 24 (the full sequence)

Tuesday Feb 24: The Pentagon sets an ultimatum. Anthropic must accept the “any lawful use” clause or lose the contract. Hegseth personally meets Dario Amodei.

Thursday Feb 26: Dario Amodei posts on Twitter. Calm tone, firm position: “Pentagon threats don’t change our position.” He details what Anthropic refuses: fully autonomous weapons, mass surveillance of American citizens. He clarifies that Anthropic is willing to support any military use that is legal and human-supervised.

The same day, Sam Altman also posts. He says he shares the same red lines as Amodei. He seems to be supporting Anthropic’s position.

Friday Feb 27, 5:01 PM: The deadline passes without agreement.

Friday Feb 27, evening: Trump signs an order banning federal agencies from using Anthropic products. Hegseth officially designates Anthropic a “national supply chain risk”, a designation traditionally reserved for foreign adversaries, never for an American company.

Friday Feb 27, same evening: OpenAI announces a deal with the Pentagon to deploy its models on classified networks. Sam Altman had said he shared the same red lines. He signed anyway.

Friday Feb 27, same day: OpenAI also announces the closing of a $110 billion fundraise: Amazon ($50B), Nvidia ($30B), and SoftBank ($30B). The largest private raise in history. Valuation: $730 billion.

Three major events. One single day.

Act 4 — The reaction (Feb 28 – March 5)

Feb 28: ChatGPT uninstalls jump 295% in 24 hours, against a normal churn of 9%. One-star ratings in the app stores surge 775% in a single day. Claude surpasses ChatGPT and becomes the most-downloaded free app in the US on the App Store.

March 1–2: The #QuitGPT movement gains momentum. Within days, 2.5 million people have either canceled their ChatGPT subscription, signed a pledge, or publicly announced their switch.

March 3: Sam Altman publicly acknowledges: “it looked opportunistic and sloppy. We shouldn’t have rushed.” He announces that the contract will be renegotiated to explicitly include restrictions on surveillance and autonomous weapons, the same restrictions Anthropic was demanding.

Also March 3: In a leaked internal note, Sam Altman tells his teams that “operational decisions” on model usage belong to the government. Subtext: OpenAI provides the technology, not the rules of use.

March 4: OpenAI employees sign an open letter in support of Anthropic. In a leaked internal memo, Dario Amodei says OpenAI accepted the deal “because they wanted to calm their employees, we actually wanted to prevent abuses.” He calls OpenAI’s communications “straight up lies”.

March 5: Anthropic is back at the negotiating table with the Pentagon. Dario Amodei meets Emil Michael, Under Secretary of Defense for Research and Engineering.

What the dominant narrative hides

The simple narrative: an ethical duel. Virtuous Anthropic, opportunistic OpenAI.

It’s clean. It’s readable. It’s incomplete.

Here are four complementary readings.

Reading 1 — Red lines as HR strategy

In a market where AI engineers are rare and highly sought after, positioning yourself as “the lab that holds its principles even under government pressure” is worth millions in talent attraction.

It’s no coincidence that Anthropic ran ads during the 2026 Super Bowl, titled “Betrayal”, “Deception”, “Treachery”, and “Violation”, that directly attacked OpenAI. Result: Claude’s active users rose 11% that week. The primary target wasn’t the general public. It was the engineers watching.

Refusing the Pentagon loudly sends a strong signal: we do what we say, even when it costs. It’s a promise to those who want to believe a coherent employer exists. Young engineers coming out of school with their convictions intact, who choose Anthropic to “not join the evil OpenAI” — they’re exactly the target of that signal.

A caveat on this reading: none of it means Amodei is cynical. He can sincerely hold his positions AND be aware that they serve recruiting. The two aren’t mutually exclusive.

Reading 2 — This was perhaps primarily a commercial negotiation

Anthropic had a $200 million contract with the Pentagon. The dispute wasn’t “work with the military or not” — they’d been doing it since June 2024. The dispute was about renewal terms.

Saying “our red lines don’t change” is a version of the truth that presents well. But “they don’t pay enough for the level of guarantees they’re asking for” is probably also true — and less elegant to say publicly.

The fact that Anthropic came back to the table on March 5 — and that the terms renegotiated by OpenAI now include the same guarantees Anthropic was demanding — suggests Anthropic’s position wasn’t untenable. It was just uncomfortable for everyone.

Reading 3 — The lock-in game

AI integrates into corporate and government systems. The cost of migrating away from a model embedded in classified infrastructure is enormous: validation, certification, training, connectors, rebuilt workflows.

Defense companies that had been deploying Claude since 2024 don’t want to reconfigure everything. Anthropic knows this. Leaving without negotiating means abandoning that leverage and ceding ground to OpenAI — which will then be deeply integrated into tomorrow’s systems. Being present in the software running in ten years is the real competitive game in B2B AI. Not consumer subscriptions.

Reading 4 — The irony of guardrails

There’s something structurally paradoxical about this affair.

As noted in Act 1, ChatGPT is the quintessential consumer AI and also one of the most restrictive in practice: stacked filters and safety metaprompts, frequent refusals on sensitive subjects, and now advertising in the free tier.

Claude Code works differently. No filter overlay on top of the model, no blocks in the middle of a development session. It runs directly in the editor. It’s a real work tool, made for developers rather than for mass consumption, which is precisely why professional developers have adopted it so widely.

And that’s where the irony lies.

The most “open” AI in practice — the one that allows you to do the most, not bridled for mass consumption — comes from Anthropic, the lab that refused to sign with the Pentagon without guarantees on autonomous weapons.

The most filtered AI in daily use comes from OpenAI, which just signed without those guarantees.

Ethics documents don’t predict behavior well under real pressure. And the restriction visible to the user doesn’t reflect the guardrails that actually matter — those that apply to military use, classified data, government access.

What matters is what a company does when it really costs.

The root problem — The state always wins in the end

This isn’t cynicism. It’s recent history.

2006, AT&T and the “splitter rooms”: AT&T engineers installed NSA surveillance equipment in the company’s network nodes, under legal compulsion and with a total prohibition against informing their management. This isn’t a rumor: the EFF documented the case, and internal documents leaked. The same kind of compelled cooperation was later given a statutory basis in FISA Section 702 (enacted in 2008), combined with a total gag order: an employee can be individually compelled to cooperate, without the CEO being in the loop, and without being able to tell anyone, including colleagues.

2013, PRISM: Snowden reveals the NSA was collecting digital communications with the cooperation of Google, Apple, Microsoft, and Facebook. Legally compelled cooperation. Impossible to disclose.

2016, Apple vs FBI: Tim Cook publishes an open letter. Big public debate. Apple “wins”: the FBI finds another technical solution. The underlying question remains open.

2022, Twitter Files: Years of communications between Twitter and the FBI reveal on-request moderation and account suspensions. Twitter was cooperating. Silently.

The legal tools exist: the CLOUD Act (2018), which requires American tech companies to hand over data stored abroad when served with a court order; National Security Letters with total gag orders; FISA Section 702 for warrantless access.

Anthropic can refuse a commercial contract with the Pentagon. Its employees cannot refuse a FISA order.

And everyone in Silicon Valley knows this — executives, senior engineers, specialized lawyers. It’s a structural reality of the American tech industry since at least 2001.

The creator’s dilemma — this isn’t new

This week, several people mentioned a book that Anthropic employees receive when they join: “The Making of the Atomic Bomb” by Richard Rhodes (1987, Pulitzer Prize). A deliberate choice by management.

The book traces the creation of the atomic bomb by the physicists of the Manhattan Project. Brilliant people, often motivated by defensive reasons — build the bomb before the Nazis. People who believed, for the most part, they were working for good.

In 1945, Leó Szilárd, who had drafted the letter to Roosevelt (signed by Einstein) that helped launch the Manhattan Project, organized a petition of researchers to prevent the bomb from being used on Japanese cities without prior warning.

The petition was classified by the military. It did not reach Truman before Hiroshima.

Szilárd had helped create the very thing he now wanted to stop. He had built the technology. He had no control over its use.

The structure of the problem is identical. We create something powerful. We negotiate guardrails. We believe the guardrails will hold. And we discover — sometimes too late — that they’re negotiable.

Giving this book to new hires is a way of saying: you know what you’re building. So do we.

What I take from this — actionable insights

On corporate consistency: Ethics documents are only worth what a company is willing to pay to uphold them. The true measure of a position is the cost one accepts to maintain it. Anthropic lost a $200 million contract and got blacklisted by the American administration. That’s a concrete measure.

On reading corporate communications: When a company publishes a strong ethical position in the context of a commercial crisis, ask yourself: what’s the secondary interest of this communication? Recruiting? Competitive differentiation? Indirect negotiation? Sincerity and self-interest can both be true at once. A CEO tweet is never spontaneous: the teams at these companies know exactly how crowd psychology works.

On the question of “where to work”: Young engineers who choose Anthropic to “not join the evil OpenAI” are making an understandable choice. But the structural outcome is probably the same. States can access what they want, when they want, through legal channels that have existed since 2001.

Third-order consequence: If governments can force AI labs’ hands whenever they decide to, then the real lever of AI ethics isn’t in private companies. It’s in regulation — national legislation, international treaties, open technical standards. And there, nobody is really moving.

On the attack/defense dynamic: It’s the same game as in cybersecurity. An AI to attack, an AI to defend, a more powerful AI to attack again. We develop weapons, then defenses, then new weapons. This structure has always existed in military and technological history. What changes is the speed of iteration.

Next time

This newsletter is the first episode. In the next ones, we won’t need to retrace the entire timeline — we’ll start from where we left off.

What I’m interested in following:

- Under what conditions does Anthropic ultimately sign?

- Does OpenAI hold to its renegotiated guarantees?

- And the fundamental question: can an AI model be used to target military strikes — in Iran, as reportedly already happened — within current contractual guarantees?

Sources: Full timeline — TechPolicy.Press · MIT Technology Review · CNBC Mar 5 · TechCrunch — “straight up lies” · CNBC — Altman “sloppy” · IBTimes — 295% uninstalls · CNBC — Super Bowl +11% · TechCrunch — $110B · EFF — NSLs · CNBC — Claude used in Iran · Constitutional AI — Anthropic

Reference book: Richard Rhodes, “The Making of the Atomic Bomb” (1987)