Poiesis, praxis, and the tasks that switch sides

What 2,500 years of philosophy tells us about what will survive AI

Tuesday morning. A manager receives an email from leadership: his team of 6 is being cut to 3. No restructuring plan, no press release. The other 3 will leave gradually — they just won’t be replaced. The official reason: “process optimization with new tools.”

Two years ago, this scenario looked like the dominant model: a silent reduction, by attrition, without visible friction. AI was nibbling at tasks at the margins. Companies adapted quietly.

That model is obsolete.

Since 2025, large companies, led by the tech firms with early access to the most powerful models, have stopped pretending. The numbers are public, signed, and openly acknowledged:

- Klarna reduced its workforce by 40% over two years. Its AI now does the work of 700 people, and the CEO said so himself on CNBC in 2025, with the casual tone of someone announcing a good quarter.

- IBM: 8,000 HR positions eliminated by AI.

- Microsoft: 15,000 positions cut in two waves between May and July 2025. Satya Nadella stated that AI now writes 20–30% of the company’s code, and that “the headcount we grow will grow with a lot more leverage than the headcount we had pre-AI.”

- Amazon: Andy Jassy published an internal memo in June 2025 explicitly stating that AI “will reduce our total corporate workforce in the coming years.” Cuts of 14,000 and then 16,000 positions followed, in October 2025 and January 2026.

- Oracle: between 20,000 and 30,000 positions currently being eliminated — 12 to 18% of the workforce. Larry Ellison stated that AI allows the company to “build more software in less time with fewer people.” Meanwhile, Oracle is investing $50 billion in AI data centers this year.

- Duolingo announced in April 2025 that it would “gradually stop using contractors to do work that AI can handle.” CEO Luis von Ahn shared the memo publicly.

- Chegg, the homework help company, cut 45% of its workforce in October 2025 — not because it automated its processes, but because its customers stopped paying: they use ChatGPT instead. This is no longer internal automation. It’s demand destruction.

- UPS: 48,000 positions eliminated in November 2025. Not tech — physical logistics. “AI allows us to move more volume with fewer workers.”

Tech always leads. What is happening at Microsoft and Oracle today will likely reach SMEs within two years. That is less a prediction than an extrapolation from the historical pattern of every previous technological wave, and nothing so far suggests this one will be different.

And in January 2026, Dario Amodei, the CEO of Anthropic (the company that builds Claude), publicly warned that AI would cause “unusually painful” disruption in the labor market, with 50% of entry-level white-collar positions at risk within one to five years. Other forecasts point the same way: in the WEF’s Future of Jobs Report 2025, 41% of employers worldwide say they plan to reduce their headcount by 2030 in response to AI, and Gartner predicts that 40% of enterprise applications will incorporate task-specific AI agents by the end of 2026, compared with less than 5% in 2025.

The classic model was right — and it’s becoming wrong

For ten years, the debate on AI and employment rested on a foundational study: Frey & Osborne (Oxford, 2013) estimated that 47% of American jobs were at risk of automation. That figure circulated everywhere, sometimes to alarm, sometimes to reassure.

What got cited less: when the OECD redid the analysis in 2016, not occupation by occupation but task by task across 21 countries, the figure fell to 9%. The gap between 47% and 9% isn’t a contradiction; it’s a demonstration. What gets automated is the routine portion of each position, not the entire job. An accountant doesn’t disappear: their data entry does. Their interpretation of an anomaly, in front of a client in crisis, does not.

This model had a solid logic, built on an implicit assumption: AI automates isolated tasks, one at a time, in a flow supervised by a human. And that assumption was correct — until it wasn’t.

The autonomous agents of 2025-2026 no longer reason at the task level; they reason at the workflow level. Claude Code can work for five hours without interruption on an entire project: reading the codebase, planning, writing, testing, fixing, opening a pull request. OpenAI Operator books flights, handles complaints, and fills out forms end to end. Salesforce eliminated 4,000 support positions, replacing them with Agentforce agents that handle customer requests from A to Z.

AI no longer replaces the task. It replaces the process.

The second-order consequence is significant: even professions the classic model considered “protected” have entire workflows that are becoming automatable. The radiologist who reads 200 images a day is a target. The radiologist who announces a diagnosis to a family and decides on a treatment protocol under uncertainty is not. The distinction is no longer between professions; it runs within each profession.

Aristotle already had the answer

More than 2,300 years ago, Aristotle distinguished two fundamentally different modes of human activity.

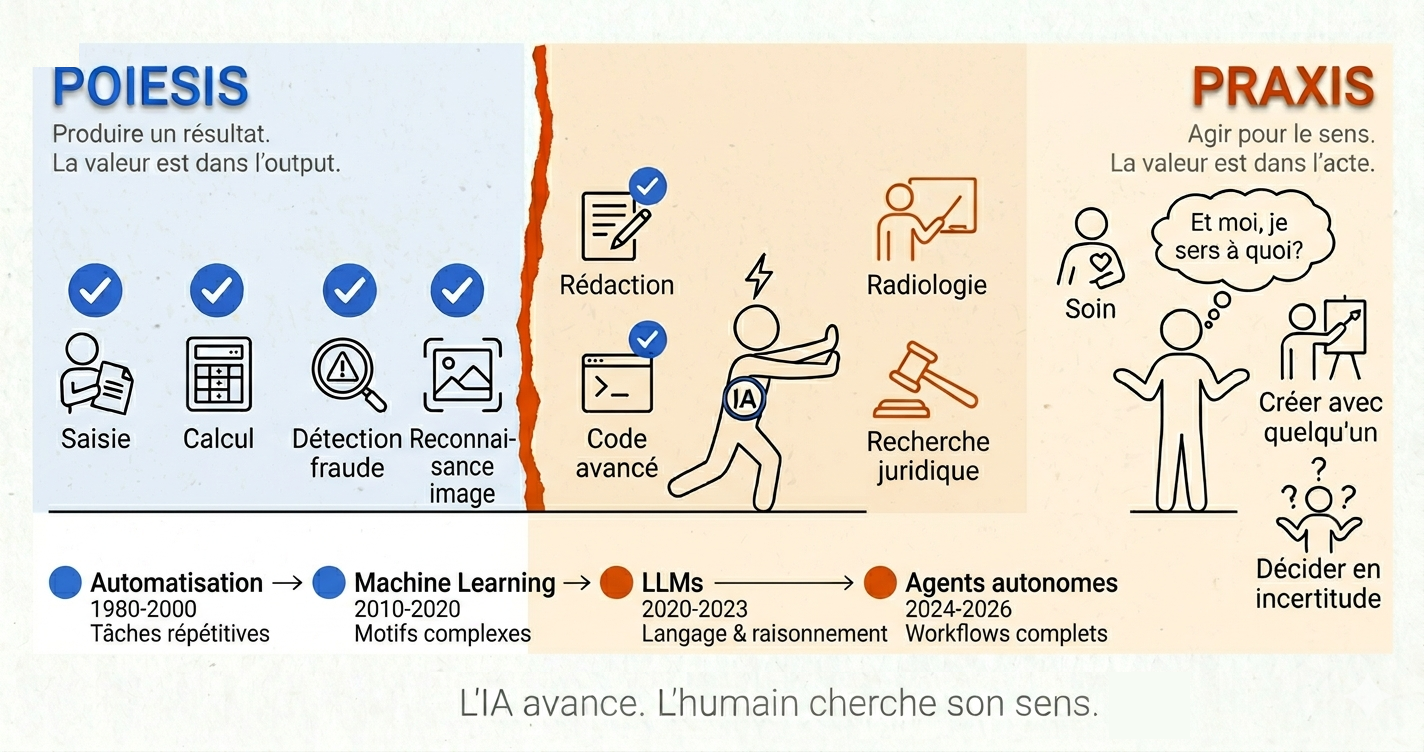

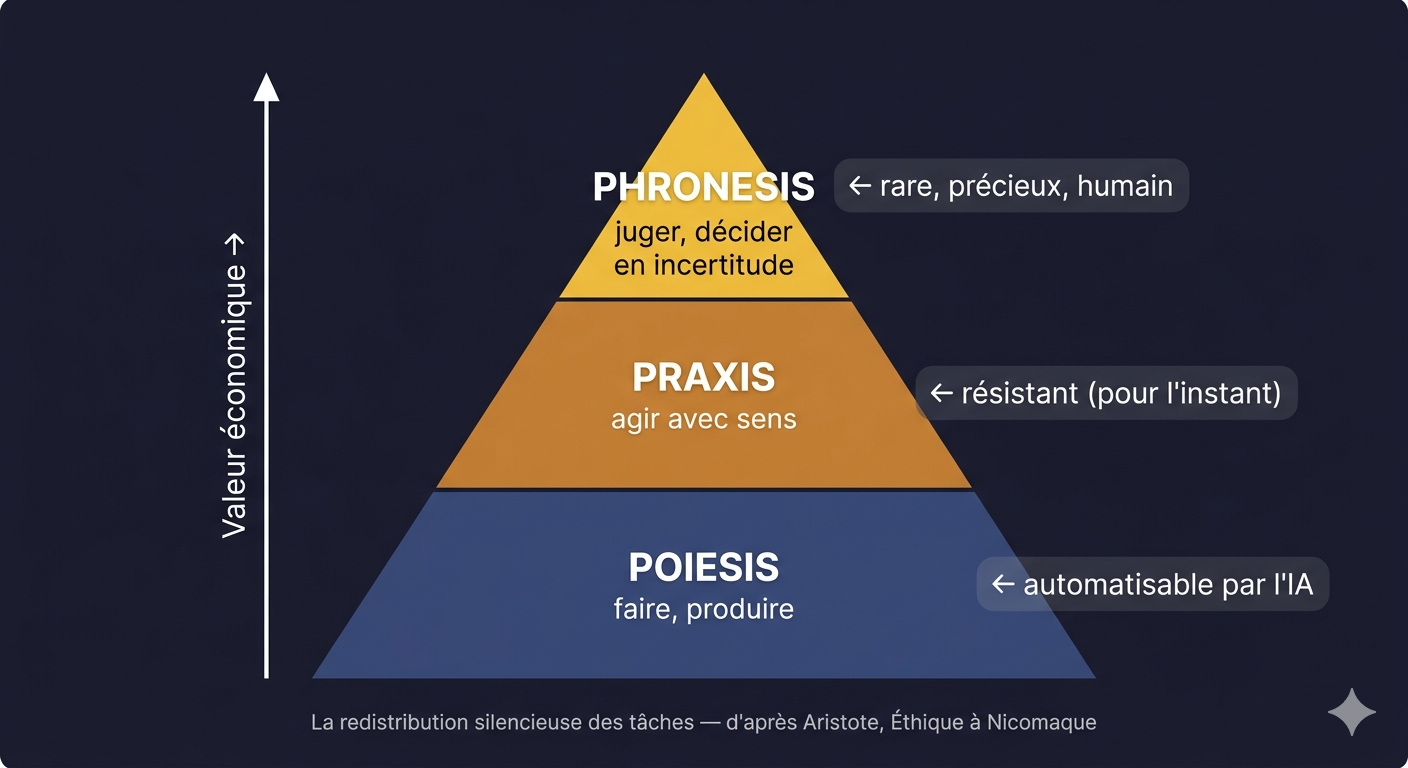

Poiesis — from the Greek poiein, to make, to produce — refers to any action oriented toward an external object. A report, an analysis, code, a meal. The value is in the result, not in the act itself. Once the result is obtained, the activity has no further reason to exist. Poiesis is a means to an end.

Praxis — from the Greek prassein, to act — refers to action whose value resides in the action itself. Healing, deliberating, teaching, creating with someone. The activity is its own end. One doesn’t heal in order to have healed. One heals because the act of healing is itself what matters.

“Action has its end in itself, while production has its end in an object separate from the activity.” — Aristotle, Nicomachean Ethics

AI automates poiesis. It excels at it, and it’s often better than we are. What it cannot automate is praxis, because praxis isn’t defined by its output. It’s defined by who acts and by how that action transforms what happens between the people involved.

An AI agent can write a medical report more accurately than a doctor. It cannot be the doctor who looks the patient in the eyes and announces something irreversible. It’s not that it lacks technical competence — it’s that the value of this act is inseparable from the human presence that accomplishes it.

Viktor Frankl — neuropsychiatrist, concentration camp survivor, author of Man’s Search for Meaning (1946) — formulated this under extreme conditions. When everything had been stripped from a prisoner — his possessions, his identity, his physical freedom — what remained was the capacity to choose one’s attitude toward what was happening. This inner freedom isn’t an abstract philosophical consolation. It’s the operational definition of praxis: the value of a human act that cannot be delegated because it is constitutive of the identity of the one who accomplishes it.

Hannah Arendt, in The Human Condition (1958), formulated a warning that no one took seriously at the time: a society freed from routine work by automation could find itself with an empty freedom — people whose entire identity had been built around production, without the cultural and existential resources to inhabit the freedom that automation offers them. This isn’t a rhetorical paradox. It’s a prediction that is beginning, very concretely, to materialize.

In 2026, I work at a large company alongside my personal projects. My position is unusual: not uncomfortable, but clear-eyed. I made a deliberate choice to be on the front lines (building systems, adopting AI, becoming the go-to person on the subject in my environment) precisely to avoid ending up on the receiving end. Even before autonomous agents, when I joined my current role, I automated processes that took two weeks and brought them down to two days. In doing so, I raised the question myself, without quite voicing it: does this still justify a full-time position on these tasks?

I see people missing the train, avoiding the subject and hoping it will pass. I understand the instinct, but I know where it leads. The poiesis/praxis distinction is therefore not an abstract category for me; it’s the filter through which I decide, very practically, where to invest my energy.

Four ruptures that changed which side tasks are on

This shift isn’t new. It happens in waves, each time a technological rupture makes codifiable what previously wasn’t.

1980-2000 — Automation and scripts

The first tasks to tip over were the most mechanical: data entry, calculation, sorting, filing, batch processing. As soon as a task can be expressed in deterministic rules, it tips.

2010-2020 — Machine Learning

Machine learning crossed a different threshold: tasks that aren’t expressible in explicit rules, but that have patterns detectable in data. Fraud detection, image recognition, recommendations, diagnosis on structured data. ML learned to extract these patterns without anyone knowing how to formulate them explicitly.

2020-2023 — LLMs

Large language models tipped over tasks related to language and discursive reasoning: writing, synthesis, translation, text analysis, answering complex questions, code generation. Tier-1 customer support, document research, standard report writing: tipped.

2024-2026 — Autonomous agents

This is the rupture in progress. Autonomous agents don’t do one task at a time in a supervised flow. They execute complete workflows — sequences of actions, intermediate decision-making, adaptation during execution. What this rupture makes codifiable: everything that was protected not by the complexity of an isolated task, but by the complexity of the sequence — the continuity of judgment that only a human could ensure. Agents have that continuity.

What doesn’t tip yet: tasks whose value depends on the radical unpredictability of the situation and the physical and relational presence of the human.

The question to ask for each task in one’s own position is therefore not “is this complex?” but “is this codifiable by one of these four methods?” If so — it will eventually tip.

The tasks that switch sides

The poiesis/praxis distinction holds. What changes is the classification of tasks.

This isn’t a frontier that advances. It’s a repertoire that rewrites itself. A task can be in praxis for decades — because it requires judgment, presence, a form of contextual intelligence — then slip into poiesis the day an AI learns to do it as well, or better. The movement isn’t spatial. It’s categorical.

This was true before AI. Industrialization did exactly this with artisanal work: weaving, forging, assembling were praxis in the sense that mastery of the gesture defined the craftsman. The machine transformed these acts into poiesis — separating them from the identity of the one who performed them. AI is doing the same thing, but in domains we thought were immune.

Legal research was praxis for the junior lawyer: it mobilized their judgment, their legal culture, their ability to navigate ambiguity. Harvey AI does it better and faster today, not because the law has become simple, but because this part of the law is now codifiable: it slipped in the LLM wave. Complex architecture coding, long the preserve of the senior developer, is following the same path with autonomous agents: Claude Code works for five hours on an entire project without supervision. Diagnostic radiology, a medical act charged with responsibility, training, and accumulated experience, has seen vision models surpass human radiologists at detecting certain cancers since the machine learning wave. It is slipping too.

What these examples have in common: they aren’t simple tasks. They’re codifiable tasks — meaning you can extract the rules, the patterns, the success criteria, and teach them to a model. This is the codifiability threshold: once a task crosses it, it will eventually slip. The question isn’t if. It’s when.

The silent reduction — what no one says

No one was laid off. The positions just ceased to exist.

Oracle’s, Amazon’s, and Microsoft’s layoffs make headlines. They’re spectacular, quantified, and openly acknowledged by executives who sign public memos. But they represent only part of the phenomenon: the visible part.

There’s another mechanism, just as consequential but without the noise, affecting millions of organizations that will never make the front page of TechCrunch. Not announced eliminations. Directed attrition: companies document processes, build AI companions, “augment” teams — and then don’t rehire when someone leaves. One person does the work of three. Headcount shrinks without restructuring plans, without union alerts, without media coverage.

The mechanism is so discreet that social protections don’t see it — they were designed for layoffs, not for non-replacement.
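The arithmetic of non-replacement shows why this mechanism stays invisible: no single month looks like a cut. A minimal sketch in Python, assuming a team of 6 as in the opening scenario and a hypothetical 15% annual attrition rate (illustrative numbers, not data): each year some staff leave, and only a fraction of those departures are refilled.

```python
def project_headcount(start: int, attrition: float, replacement: float, years: int) -> list[float]:
    """Expected headcount at the end of each year under partial replacement.

    attrition:   hypothetical fraction of staff who leave each year.
    replacement: fraction of those departures that get refilled
                 (1.0 = every departure refilled, 0.0 = none).
    Returns years + 1 values, starting with the initial headcount.
    """
    path = [float(start)]
    for _ in range(years):
        current = path[-1]
        departures = current * attrition
        hires = departures * replacement
        path.append(current - departures + hires)
    return path


# Same team, same attrition; only the refill policy changes.
for replacement in (1.0, 0.5, 0.0):
    path = project_headcount(6, attrition=0.15, replacement=replacement, years=5)
    print(f"refill {replacement:.0%}:", [round(h, 1) for h in path])
```

With every departure refilled, the team stays at 6. With none refilled, expected headcount falls to about 3.1 after four years and below 3 by the end of the fifth, without a single layoff. Real attrition rates vary widely by sector and role; the point is the shape of the curve, not the specific numbers.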

The second-order consequence is troubling: the “system builders” — those who document processes, who build AI companions, who are praised for it — involuntarily participate in creating the conditions for their colleagues’ replacement. It’s not malice. It’s local rationality.

I experience this from the inside. I’ve automated part of my own tasks by building AI tools for my personal use — a system that captures, classifies and updates my work ideas in real time. This kind of initiative is valued in most large companies. What no one says out loud: that’s exactly why a position isn’t refilled when someone leaves.

MIT Sloan Management Review (2023) documented this mechanism under the name “directed attrition”: companies that adopt AI don’t lay people off; they simply don’t replace departures. McKinsey (2023) confirms it: “augmented” teams keep their headcount stable in the short term, then shrink as departures go unreplaced.

The identity crisis that’s coming

There’s an angle we haven’t named yet, and it may be the most important one in the long run.

In industrial and post-industrial societies, personal identity is largely built around work. “What do you do for a living?” is the central question of almost any first encounter. An accountant doesn’t just count; they are an accountant.

When AI takes these tasks — not the entire profession, but the part that structured daily life and created the sense of competence — something breaks. Not the position. The identity that was attached to it.

Researchers at MIT documented this phenomenon under the name AI identity threat (2022): workers whose AI absorbs the characteristic tasks of their profession experience an erosion of their professional identity well before their position is threatened. The accountant whose AI automatically reconciles accounts isn’t laid off. But they gradually lose what gave them the feeling of being good at their work.

A 2025 study published in Scientific Reports (N=269) showed something striking: passive use of AI (copy-pasting generated content without engaging in the work) significantly reduces perceived meaning at work, self-confidence, and the sense of ownership over what one produces. It’s not AI that destroys meaning. It’s passive delegation.

Hannah Arendt had anticipated this crisis: a society that has solved the problem of work but hasn’t solved the problem of the meaning of work finds itself with individuals freed from a constraint without the resources to inhabit that freedom.

The next decade will produce millions of people in this situation: their position still exists, their routine tasks have disappeared or are disappearing, and no one taught them to build an identity on anything other than what they produce.

What resists: the practical filter

Faced with this, the question isn’t “will my profession survive?” It’s more precise.

For each recurring task in your position, ask yourself: if an AI did exactly this in my place, would the result be identical for the person receiving it?

If the answer is yes, it’s poiesis: automate it, delegate it, and above all stop defending it as human added value, because it no longer is.

If the answer is no, it’s praxis: develop it, deepen it, put it forward. That’s where your value becomes structurally difficult to replace.

I built a tool to make this analysis systematic: the AI-Proof Job Scanner analyzes a position task by task and calculates a resilience score against automation. The methodology comes directly from this framework.
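The yes/no filter is simple enough to write down. The sketch below is a minimal illustration in Python, not the AI-Proof Job Scanner’s methodology, which isn’t described here: the `Task` fields, the example tasks and hours, and the time-weighted “praxis share” are hypothetical stand-ins for a resilience score. You still answer the filter question yourself; the code only tallies the answers.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    hours_per_week: float
    # Your honest answer to the filter question: "If an AI did exactly this
    # in my place, would the result be identical for the person receiving it?"
    identical_if_ai: bool


def classify(task: Task) -> str:
    """Yes -> poiesis (delegate it); no -> praxis (develop it)."""
    return "poiesis" if task.identical_if_ai else "praxis"


def praxis_share(tasks: list[Task]) -> float:
    """Hypothetical score: fraction of working time spent on praxis tasks."""
    total = sum(t.hours_per_week for t in tasks)
    if total == 0:
        return 0.0
    praxis_hours = sum(t.hours_per_week for t in tasks if classify(t) == "praxis")
    return praxis_hours / total


tasks = [
    Task("Monthly account reconciliation", 10, identical_if_ai=True),
    Task("Standard reporting", 6, identical_if_ai=True),
    Task("Explaining an anomaly to a client in crisis", 4, identical_if_ai=False),
]
for t in tasks:
    print(f"{t.name}: {classify(t)}")
print(f"Praxis share of working time: {praxis_share(tasks):.0%}")
```

Here 4 of 20 weekly hours, or 20%, are praxis. Weighting by time is only one possible choice; weighting by the value each task creates would give a different score.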

| Activity | Resistance to automation | When it slipped | Reason |
|---|---|---|---|
| Data entry, routine accounting | Near zero | Scripts (1980-2000) | 100% codifiable from the start |
| Tier-1 customer support | Low | LLMs (2020-2023) | Scripts + NLP |
| Radiology (image reading) | Low-medium | ML (2010-2020) | AI surpasses human accuracy on certain detection tasks |
| Medicine: therapeutic relationship | High | Has not slipped | Presence, shared uncertainty |
| Psychological therapy | Very high | Has not slipped | Intersubjectivity, trust |
| Teaching (factual content) | Low-medium | LLMs + Agents | AI adapts better to individuals |
| Teaching (mentoring, identity) | Very high | Has not slipped | Presence, modeling, adjustment |
| Lawyer (legal research) | Low | LLMs + Agents | Harvey AI already dominates |
| Lawyer (pleading, negotiation) | High | Has not slipped | Judgment, persuasion, relationship |
| Grief work, palliative care | Extremely high | Has not slipped | Irreplaceable presence |
| “Average” creativity | Medium | LLMs (2020-2023) | AI surpasses the average human, for now |
| Exceptional creativity | High (uncertain) | To watch | Top 10% of humans still far ahead, but the gap is closing |

Before reacting to these figures, avoid both symmetric errors: don’t deny what’s happening, and don’t conclude that an employment apocalypse is coming. Employment behaves like an elastic band: Twitter/X cut 80% of its workforce under Musk, then started rehiring for different profiles. What disappears is the value of certain skills in certain contexts, not the demand for human work in general.

Economics gives us a framework to understand why. Price elasticity of demand measures how strongly demand for a good reacts when its price changes, and it depends largely on whether substitutes exist. An inelastic good, such as oil or insulin, sees its demand stay roughly stable even when the price rises, because no functional equivalent exists. An elastic good loses its demand quickly as soon as a cheaper alternative appears. The analogy extends to skills: a praxis skill is inelastic by definition, since its value depends precisely on the fact that no agent can reproduce it identically. A codifiable poiesis skill is elastic: as soon as a model does the same thing more cheaply, demand for the human version disappears.

Skills obey the same laws as prices: what is substitutable will eventually be substituted.
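For reference, here is the textbook definition behind the analogy: price elasticity of demand is the percentage change in quantity demanded divided by the percentage change in price. The figures in the worked lines are illustrative, not measurements of any real market.

```latex
\varepsilon = \frac{\Delta Q / Q}{\Delta P / P}
\qquad
|\varepsilon| < 1 \ \text{(inelastic)}, \quad |\varepsilon| > 1 \ \text{(elastic)}

\text{Inelastic good: price } +10\%,\ \text{quantity } -2\%
\;\Rightarrow\; \varepsilon = \frac{-0.02}{0.10} = -0.2

\text{Elastic good: price } +10\%,\ \text{quantity } -30\%
\;\Rightarrow\; \varepsilon = \frac{-0.30}{0.10} = -3
```

Read through the analogy, a codifiable poiesis skill behaves like the second case: a modest price advantage for the model moves most of the demand away from the human version.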

The answer isn’t to resist. It’s to move up a level — from doing to directing, from producing to deciding why we produce, from executing to validating with judgment.

But we need to name a limit that the figures don’t anticipate well: “moving up a level” assumes you’ve already climbed the first rungs. Entry-level positions didn’t just serve to execute tasks — they served to train. A junior lawyer who does legal research for two years isn’t just learning to search: they’re learning to read the law, to navigate ambiguity, to develop the judgment that will make them a senior. If Harvey AI does this research on their behalf from day one, what exactly are they learning?

Several recent studies support this intuition: students who learn without AI retain significantly more than those who rely on it passively. The iterative loop of error, correction, and understanding is the mechanism through which praxis forms. AI can accelerate it if used well, but if it executes in one’s place, it short-circuits precisely what it’s supposed to accelerate. The medium-term risk: an economy with senior positions and no one trained to fill them.

The most exposed positions are also entry-level ones — those that allowed people without university credentials to build progressive expertise. If these rungs disappear, it’s a question of social mobility as much as individual retraining.

Made by Human — and then what?

When factories moved offshore in the 1980s-2000s, origin labels gained new weight in reaction: Made in France, Made in Italy. Proof of origin became a marketing argument, with human and geographic traceability as added value in a world of optimization. AI will probably provoke something analogous. For part of the population, knowing that a text, a work, or a piece of advice was produced by a human will become a signal of value in itself: not because the result is necessarily better, but because the relationship and engagement behind it are different.

I’m in an ambiguous position myself on this point: I use AI heavily to produce, and I fully own that. What I do with it, I couldn’t do without it, not at this pace and not at this quality. But I direct, I decide, I validate, I bring the perspective. Is this Made by Human? Probably a third category that doesn’t yet have a name.

From Made in France to Made by Human — we've moved from the place of production to the identity of the producer.

This shift raises a question that Aristotle’s first two terms don’t fully resolve: what is it, exactly, to direct an AI? Neither making, nor acting — something third. Aristotle, here too, had a word.

The third economy — phronesis

Perhaps poiesis and praxis need a third term.

What is it to write an article by directing an AI? It isn’t poiesis — I’m not producing the text word by word. It isn’t pure praxis — the execution is delegated. It’s something intermediate: the intention remains human, the judgment remains human, but the gesture is distributed between the human and the machine.

Aristotle had a term for this too: phronesis — practical wisdom, the capacity to decide rightly in complex situations without pre-established rules. Not making, not acting — judging. Directing without executing. Conceiving without producing oneself. Naval Ravikant has a modern version of this in what he calls Judgment: being paid for one’s decisions, not one’s time — because good judgment applied to leverage produces exponential results. This is exactly what AI makes possible at scale: the leverage exists, it awaits judgment.

Phronesis isn’t a starting point. It’s built on years of accumulated praxis — which is why short-circuiting entry-level positions is risky: one doesn’t develop judgment by skipping execution, one develops it through execution, until execution becomes unnecessary.

To enter praxis, you must have passed through poiesis. To enter phronesis, you must have exhausted praxis.

Each level presupposes the one below it:

- Phronesis presupposes praxis: you cannot judge under uncertainty without first knowing how to act with meaning — good judgment operates within action, not above it.

- Praxis presupposes poiesis: meaningful human action always unfolds in a material world that must first be built.

In Aristotle this is explicit: phronesis is the virtue that governs praxis, and it cannot exist without it. This isn’t an abstract hierarchy of value; it’s a hierarchy of dependency.

What Aristotle really defined with these three terms is a hierarchy of what human beings can entrust to something other than themselves. Poiesis is delegable — to a slave, to a tool, to a machine. Praxis is not: it implies the character of the one who acts. Phronesis even less so: it’s the capacity to judge rightly in situations where no rule is sufficient.

Aristotle didn't foresee Claude. But he had already anticipated what we would delegate to it.

This is perhaps the third economy taking shape: not that of those who make (poiesis, automatable), not that of those who act with presence (praxis, irreplaceable in human context) — but that of those who judge with enough competence and context to direct what agents execute.

Developing phronesis: a sketch of a method

Phronesis isn’t decreed; it’s built, and this may be the most important practical challenge of the next decade for anyone who wants to remain relevant. A few non-exhaustive leads:

Map your tasks. For each recurring activity, ask honestly whether it falls under poiesis (executable, delegable) or praxis (irreducibly human). Not to reassure yourself — to decide where to invest your attention. The AI-Proof Job Scanner is a tool I built for exactly this: analyzing a position task by task and calculating a resilience score.

Don’t skip steps. Use AI to accelerate learning, not to replace it. The error-correction-understanding loop is the mechanism for forming judgment — delegating execution before having mastered what you’re delegating means optimizing speed at the expense of competence. You can arrive somewhere quickly without knowing where you are.

Accumulate traced decisions. Judgment forms through the experience of past decisions and their consequences. Keep a log — not of tasks, but of decisions and their results. This is what Naval Ravikant calls the Judgment Track Record: the reputation for deciding well builds over time, and it doesn’t build without a trace.
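The advice only asks for a log of decisions and their results, but the structure of such a log can be made concrete. A minimal sketch in Python, assuming a simple in-memory log; `Decision`, `DecisionLog`, `hit_rate`, and the example entries are hypothetical names and data chosen for illustration. The key design choice is recording the expected outcome at decision time, so the later review can’t be rewritten by hindsight.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Decision:
    when: date
    decision: str
    rationale: str
    expected: str  # what you expect to happen, written before you know
    # Filled in at review time; None means "consequences not yet known".
    went_as_expected: Optional[bool] = None
    notes: str = ""


@dataclass
class DecisionLog:
    entries: list[Decision] = field(default_factory=list)

    def record(self, decision: Decision) -> int:
        """Log a decision when it is made; returns its index for later review."""
        self.entries.append(decision)
        return len(self.entries) - 1

    def review(self, index: int, went_as_expected: bool, notes: str = "") -> None:
        """Record the outcome once the consequences are visible."""
        self.entries[index].went_as_expected = went_as_expected
        self.entries[index].notes = notes

    def hit_rate(self) -> Optional[float]:
        """Share of reviewed decisions that went as expected (None if none reviewed)."""
        reviewed = [e for e in self.entries if e.went_as_expected is not None]
        if not reviewed:
            return None
        return sum(e.went_as_expected for e in reviewed) / len(reviewed)


log = DecisionLog()
i = log.record(Decision(date(2026, 1, 12), "Delegate weekly reporting to an agent",
                        "Output is standardized", "Same quality, four hours a week saved"))
log.record(Decision(date(2026, 2, 3), "Present the audit findings to the client myself",
                    "The relationship is at stake", "Client accepts the remediation plan"))
log.review(i, went_as_expected=True, notes="Quality held; one formatting fix")
print(log.hit_rate())
```

Only the first decision has been reviewed, so the hit rate covers that single entry; the second stays open until its consequences are known. A hit rate is a crude summary at best; the value is mostly in rereading the rationale next to the outcome.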

There’s probably a more complete method to build around this — how to deliberately move from poiesis to praxis, then to phronesis. It’s something I’m actively exploring, and that I’m considering formalizing. If this subject interests you, reply to this newsletter — it’s exactly the kind of signal that tells me where to go.

Sources: Frankl, V. (1946). Man’s Search for Meaning · Aristotle, Nicomachean Ethics (~350 BC) · Arendt, H. (1958). The Human Condition · Frey & Osborne (2013). The Future of Employment, Oxford Martin School · Arntz, Gregory & Zierahn (2016). OECD · Autor, D. (2019). Work of the Past, Work of the Future, NBER · Brynjolfsson, Li & Raymond (2023). Generative AI at Work, NBER · Acemoglu & Restrepo (2019). Automation and New Tasks · WEF Future of Jobs Report (2025) · Klarna / CNBC (2025) · Amodei, D. / CNBC (January 2026) · Gartner (2025-2026) · Scientific Reports (2025). Relying on AI at work reduces meaning · AI identity threat, Springer (2022) · MIT Sloan Management Review (2023) · McKinsey Global Institute (2023)